Respan

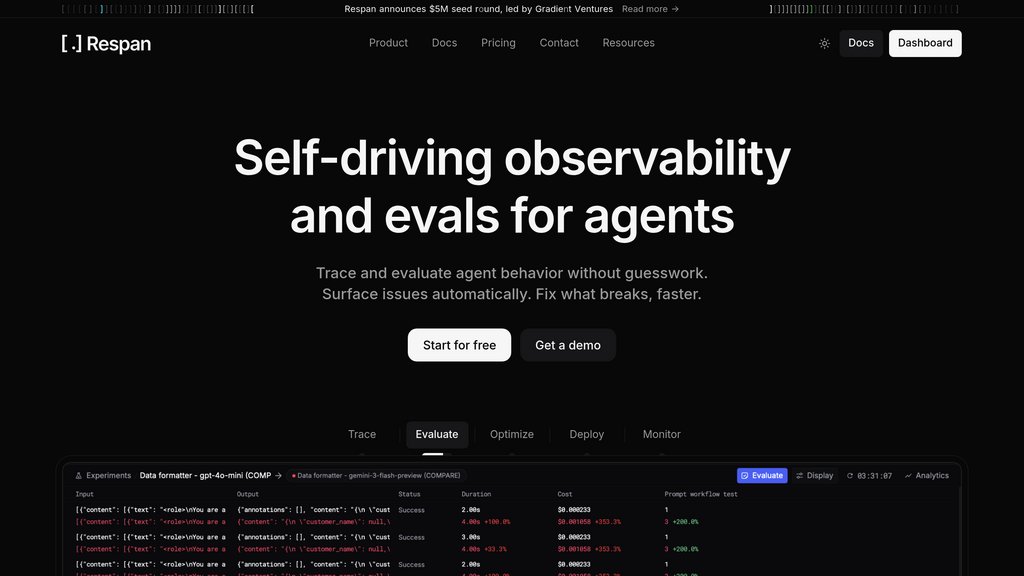

Proactive observability, evaluation, and gateway platform that helps engineering teams trace, debug, and continuously improve AI agents in production.

Product Overview

What is Respan?

Respan (formerly Keywords AI) is a unified control plane for AI agent observability and evaluation. It captures full execution traces across every LLM call, tool invocation, routing decision, and memory state — giving teams complete visibility into how their agents actually behave in production. Beyond visibility, Respan closes the loop: it runs automated workflow-level evaluations, surfaces root causes, recommends fixes, and lets teams ship prompt and model changes directly from the platform. Backed by Y Combinator and Gradient, Respan serves teams processing millions of LLM calls per hour, and is compliant with ISO 27001, SOC 2, GDPR, and HIPAA.

Key Features

End-to-End Tracing

Captures 100% of production requests with full span-level detail — inputs, outputs, tool calls, routing decisions, latency, cost, and custom metadata — reconstructed automatically into complete execution trees for multi-step agents.

Automated Evaluation Workflows

Combines code-based checks, human review, and LLM judges in a single evaluation pipeline that runs automatically whenever prompts, models, or agent behavior change, with no separate tooling required.

AI Evaluation Agent

A first-of-its-kind agent that analyzes failures across trials, localizes root causes to specific decisions, recommends which evals to add next, and intelligently samples live traffic for review.

Prompt & Model Deployment

Version, test, and promote prompts and model changes straight from the UI into production, with rollback support and A/B comparison against prior versions using the same production data.

Adaptive AI Gateway

Single gateway providing access to 500+ models with flexible routing, provider abstraction, BYOK support, and automatic failover — without rebuilding infrastructure.
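
As a rough illustration of what automatic failover means at the gateway layer, the sketch below tries an ordered list of providers and falls through to the next one when a call raises. It is a generic pattern and not Respan's API: `call_with_failover`, `flaky_provider`, and `backup_provider` are hypothetical names invented for this example.

```python
from typing import Callable, Sequence


class AllProvidersFailed(Exception):
    """Raised when every provider in the chain has failed."""


def call_with_failover(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try each (name, callable) pair in order; return the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # any provider error triggers failover
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stand-ins for real model providers.
def flaky_provider(prompt: str) -> str:
    raise TimeoutError("upstream timed out")


def backup_provider(prompt: str) -> str:
    return f"echo: {prompt}"


used, reply = call_with_failover(
    "hello",
    [("primary", flaky_provider), ("backup", backup_provider)],
)
print(used, reply)
```

A production gateway would typically add timeouts, retries with backoff, and per-provider health tracking on top of this ordering; those are omitted here to keep the pattern visible.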

Real-Time Monitoring & Alerting

Custom dashboards with 80+ graph types track quality, latency, cost, and error rates. Alerts fire via Slack, email, or text when behavior drifts or breaks, with automations to trigger follow-up evals or dataset builds.

Use Cases

- AI Agent Debugging: Engineering teams can open any production trace directly in a replay playground to reproduce failures, test fixes, and confirm resolution without guesswork.

- Regression Protection: Teams shipping frequent prompt or model updates use Respan to run evaluations against historical baselines before every release, catching quality regressions before users are affected.

- Large-Scale LLM Monitoring: Companies processing millions of LLM calls per hour, such as voice AI platforms, use Respan's async logging and thread grouping to maintain full visibility across agents, languages, and use cases at scale.

- Dataset Curation from Production: Production traces are automatically converted into labeled evaluation datasets, eliminating the manual effort of building test sets and keeping evaluations grounded in real usage.

- Prompt Optimization: Teams use live production data streams to run automatic prompt engineering directly in the platform, continuously improving agent outputs without offline experimentation cycles.
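
The Regression Protection use case above amounts to comparing a candidate release's evaluation scores against a stored baseline. A minimal sketch of that comparison follows, assuming per-metric score lists and a fixed tolerance; `find_regressions`, the metric names, and the sample scores are all hypothetical and do not reflect Respan's actual evaluation API.

```python
from statistics import mean


def find_regressions(baseline, candidate, tolerance=0.02):
    """Return metrics whose candidate average falls below the baseline
    average by more than `tolerance`.

    Assumes both dicts map the same metric names to lists of scores.
    """
    regressions = {}
    for metric, base_scores in baseline.items():
        base_avg = mean(base_scores)
        cand_avg = mean(candidate[metric])
        if cand_avg < base_avg - tolerance:
            regressions[metric] = (base_avg, cand_avg)
    return regressions


# Illustrative scores only, not real evaluation data.
baseline = {"accuracy": [0.9, 0.8, 1.0], "helpfulness": [0.7, 0.8, 0.9]}
candidate = {"accuracy": [0.9, 0.9, 0.9], "helpfulness": [0.6, 0.7, 0.7]}

regressions = find_regressions(baseline, candidate)
print(sorted(regressions))
```

In a release pipeline, a non-empty result would block promotion of the new prompt or model until the flagged metrics are investigated.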

Respan Alternatives

Tropir

Developer platform for tracing, debugging, and automatically fixing complex LLM pipelines and agent workflows.

ClawHub

Public skill registry for OpenClaw agents, offering searchable, versioned skill bundles with simple CLI-based installation.

Langfuse

Open-source LLM engineering platform for collaborative debugging, analyzing, and iterating on large language model applications.

Trigger.dev

Open-source platform and SDK for building long-running, reliable background jobs and workflows with no timeouts and full observability.

EvoMap

Infrastructure platform for AI self-evolution, enabling agents to share, validate, and inherit capabilities across models and regions through the Genome Evolution Protocol (GEP).

FastMCP

Production-ready Python framework for building MCP (Model Context Protocol) servers that securely connect LLMs to tools, data, and APIs with minimal boilerplate.

Ona

Enterprise platform that lets autonomous software engineering agents build, test, and ship software inside secure, sandboxed cloud environments.

TrueFoundry

Enterprise-ready platform for deploying, governing, and scaling agentic AI workloads with a unified AI Gateway, comprehensive observability, and compliance-ready infrastructure.

Analytics of Respan Website

🇺🇸 US: 100%

Others: 0%