Scorecard

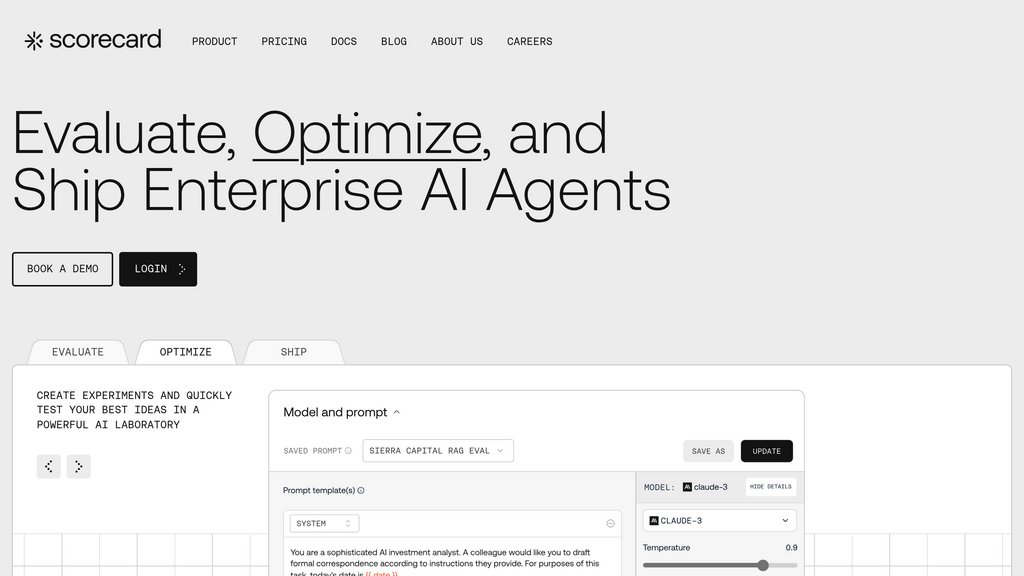

Comprehensive evaluation and observability platform for building reliable AI agents with systematic testing, continuous monitoring, and collaborative workflows.

Product Overview

What is Scorecard?

Scorecard is an enterprise-grade evaluation platform designed to help teams systematically test, evaluate, and optimize AI agents before and after production deployment. The platform addresses a critical gap in AI development by providing continuous evaluation capabilities that transform the unpredictable nature of AI systems into measurable, reliable outcomes. Rather than waiting weeks for feedback or relying on manual testing processes, Scorecard creates a fast feedback loop that enables teams to catch performance regressions early, validate improvements with confidence, and deploy AI agents that work reliably in real-world scenarios. It combines automated LLM-based evaluations, structured human feedback workflows, and real-time production monitoring to deliver a holistic view of AI agent performance.

Key Features

Testset Management and Scenario Mapping

Convert real production scenarios and edge cases into reusable test cases. Capture failures from production and automatically add them to regression test suites for continuous monitoring.
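The capture-and-replay idea described above can be sketched in plain Python. This is only an illustration of the workflow, not Scorecard's API: `RegressionCase`, `RegressionSuite`, `add_failure`, and `run_regression` are hypothetical names, and the exact-match comparison stands in for whatever evaluator a real setup would use.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass(frozen=True)
class RegressionCase:
    """One captured production interaction (hypothetical structure)."""
    user_input: str
    expected: str


@dataclass
class RegressionSuite:
    """A reusable collection of cases captured from production failures."""
    name: str
    cases: List[RegressionCase] = field(default_factory=list)

    def add_failure(self, user_input: str, expected: str) -> bool:
        """Add a failed interaction as a test case; return False if it is a duplicate."""
        case = RegressionCase(user_input, expected)
        if case in self.cases:
            return False
        self.cases.append(case)
        return True


def run_regression(suite: RegressionSuite,
                   agent: Callable[[str], str]) -> List[RegressionCase]:
    """Return the cases whose agent output does not exactly match the expected text."""
    return [c for c in suite.cases if agent(c.user_input) != c.expected]


# Usage: capture a production failure, then replay the suite against an agent.
suite = RegressionSuite("refund-policy")
suite.add_failure("Can I return an opened item?", "Yes, within 30 days.")
still_failing = run_regression(suite, lambda prompt: "I am not sure.")
```

Exact string matching is the simplest possible check; an LLM-based or rubric-based evaluator would replace the comparison inside `run_regression`.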

Domain-Specific Evaluation Metrics

Access pre-validated metrics for legal, financial services, healthcare, customer support, and general quality evaluation. Create custom evaluators tailored to specific business requirements and brand voice standards.

Multi-Turn Agent Testing

Systematically test complex agentic workflows, conversational agents, and multi-step AI systems. Supports tool-calling agents, RAG pipelines, and agent APIs without requiring code changes.

Live Observability and Continuous Monitoring

Gain real-time visibility into how users interact with AI agents through continuous evaluation. Automatically identify failures, performance regressions, and optimization opportunities across production traffic.

Collaborative Workflows and Cross-Functional Access

A centralized dashboard lets AI engineers, product managers, QA teams, and subject matter experts collaborate on evaluation design and performance validation without coding expertise.

Framework Integration and CI/CD Pipeline Support

One-line integrations with LangChain, LlamaIndex, CrewAI, the OpenAI SDK, and the Vercel AI SDK. Fits into existing development workflows and automated testing pipelines.

Use Cases

- Pre-Production Testing and Quality Assurance: AI teams can run comprehensive evaluation suites across different prompts, models, and configurations to validate performance before deploying agents to production environments.

- Production Monitoring and Regression Detection: Continuously monitor AI agent behavior against real user interactions, detect performance regressions from model or prompt updates, and prevent quality issues from impacting users at scale.

- Prompt and Model Optimization: Compare different prompts and models side-by-side through the playground interface to identify the best-performing approaches, fine-tune behavior, and validate improvements with structured metrics.

- Enterprise AI Governance and Risk Management: Leadership and compliance teams gain visibility into AI reliability, safety, fairness, and brand alignment through comprehensive dashboards and automated alerting for performance issues.

- Reinforcement Learning from Human Feedback (RLHF): Generate high-quality training datasets from evaluation results and human preferences. Use structured feedback loops to improve agent behavior through fine-tuning and continuous training cycles.

- Cross-Functional AI Quality Review: Product managers, subject matter experts, and domain specialists collaborate to validate that AI agent behavior matches user expectations and business requirements through intuitive evaluation interfaces.
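As a rough illustration of the regression-detection use case above, the following sketch compares evaluation scores from a baseline configuration against a candidate (for example, after a prompt or model update). The function name `detect_regression`, the 0-to-1 score scale, and the `tolerance` default are assumptions made for this example and do not describe how Scorecard itself computes regressions.

```python
from statistics import mean
from typing import Sequence


def detect_regression(baseline_scores: Sequence[float],
                      candidate_scores: Sequence[float],
                      tolerance: float = 0.05) -> bool:
    """Flag a regression when the candidate's mean score drops by more than `tolerance`.

    Scores are assumed to lie on a shared scale (here 0 to 1), higher being better.
    """
    drop = mean(baseline_scores) - mean(candidate_scores)
    return drop > tolerance


# Usage: scores from the same test cases before and after a prompt change.
baseline = [0.90, 0.80, 0.85]
candidate = [0.70, 0.75, 0.72]
regressed = detect_regression(baseline, candidate)
```

A mean comparison with a fixed tolerance is deliberately simple; with few test cases or noisy evaluators, a statistical test or per-case comparison would be a more careful choice.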

Scorecard Alternatives

Bluejay

Automated voice agent testing platform that simulates real-world conversations, environments, and behaviors to ensure performance, safety, and reliability.

TestDino

Smart test reporting and analytics platform for Playwright that classifies test failures, detects flakiness, and transforms debugging into actionable insights.

MAIHEM.ai

Enterprise-grade AI quality control platform offering automated testing, monitoring, and red-teaming for AI workflows at scale.

Gatling

All-in-one load testing platform designed for developers and teams to simulate real-world traffic, identify performance bottlenecks, and optimize application performance at scale.

Devzery

AI-powered API testing platform that streamlines regression, integration, and load testing within CI/CD workflows, ensuring reliable and bug-free software releases.

Beagle Security

AI-driven automated penetration testing platform for web applications, APIs, and GraphQL endpoints with comprehensive vulnerability detection and actionable remediation insights.

Userbrain

Unmoderated remote user testing platform streamlining UX research through a global tester pool and automated analysis tools.

CodeAnt AI

AI-powered code review platform that detects and auto-fixes code quality issues and security vulnerabilities across 30+ languages with seamless integration.

Analytics of Scorecard Website

🇺🇸 US: 35.16%

🇵🇰 PK: 9.84%

🇧🇷 BR: 9.79%

🇩🇪 DE: 9.1%

🇬🇧 GB: 8.03%

Others: 28.08%